Holotron-12B

March 16, 2026

Introducing Holotron-12B - A High Throughput Model for the Age of Computer Use Agents

We're thrilled to release Holotron-12B, a multimodal computer-use model from H Company. Post-trained from the open NVIDIA Nemotron-Nano-2 VL model on H Company’s proprietary data mixture, Holotron-12B is the result of a close collaboration between our research labs to engineer a new type of model optimized primarily for scale and performance in production.

H Company is part of the NVIDIA Inception Program.

The model is now available on Hugging Face.

Why We Built Holotron-12B

Most multimodal models today optimize primarily for static vision or following instructions. Holotron-12B, just like our Holo2 model, however, has a different goal: serving as a policy model for computer-use agents that must perceive, decide, and act efficiently in interactive environments.

With Holotron-12B, we wanted to create a model that could efficiently and effectively scale in production while handling long contexts with multiple images, and still perform well on agent benchmarks. The NVIDIA Nemotron model offered a strong foundation on the inference side, and by developing Holotron-12B we've demonstrated how much more the model can accomplish with further training.

High Throughput Inference with a Hybrid SSM Architecture

Holotron-12B's significant leap in inference efficiency is made possible by its foundational Nemotron architecture, which utilizes a hybrid State-Space Model (SSM) and attention mechanism. Unlike purely transformer-based models, this design is optimized for high-throughput serving. State-space models offer superior scalability for long-context inference by avoiding the quadratic computation cost associated with the full attention mechanism, particularly benefiting agentic workloads involving multiple images and lengthy interaction histories. In terms of inference, the main contribution of an SSM is its dramatically reduced memory-footprint: while vanilla attention stores K and V activations per token and layer (the notorious KV Cache), SSMs are a linear recurrent model, storing only a constant state per layer per generated sequence, independent of the length of the sequence.

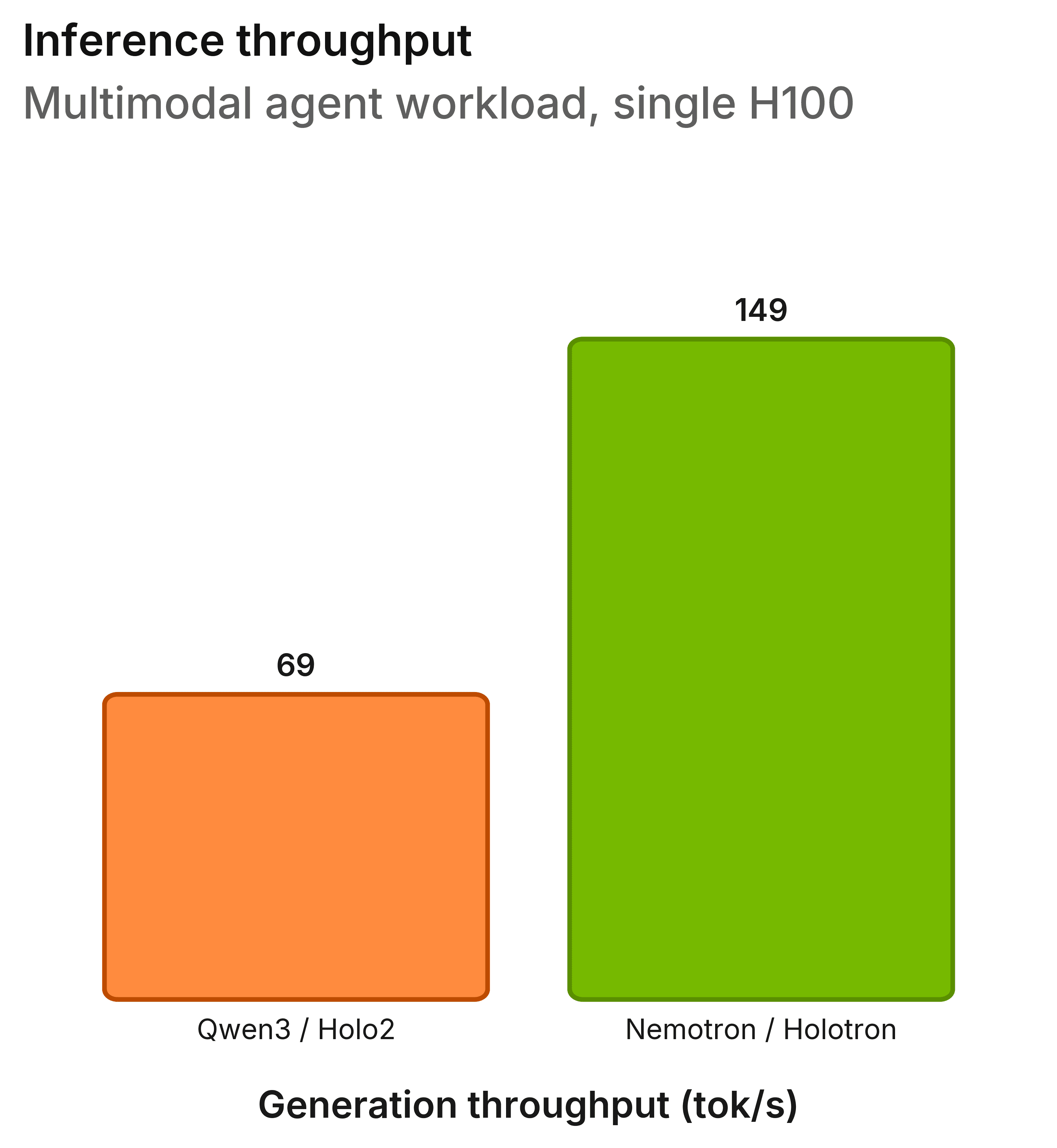

When evaluated on the WebVoyager Benchmark, the model excels using a real-world multimodal agentic workload featuring long context, multiple high-resolution images, and a high request concurrency of 100 benchmark workers. Running on a single H100 GPU and using vLLM with the latest SSM optimizations (v0.14.1), Holotron-12B achieved an over 2x higher throughput compared to Holo2-8B. This makes Holotron-12B an attractive choice for throughput-bound workloads, such as data generation, annotation, and online reinforcement learning.

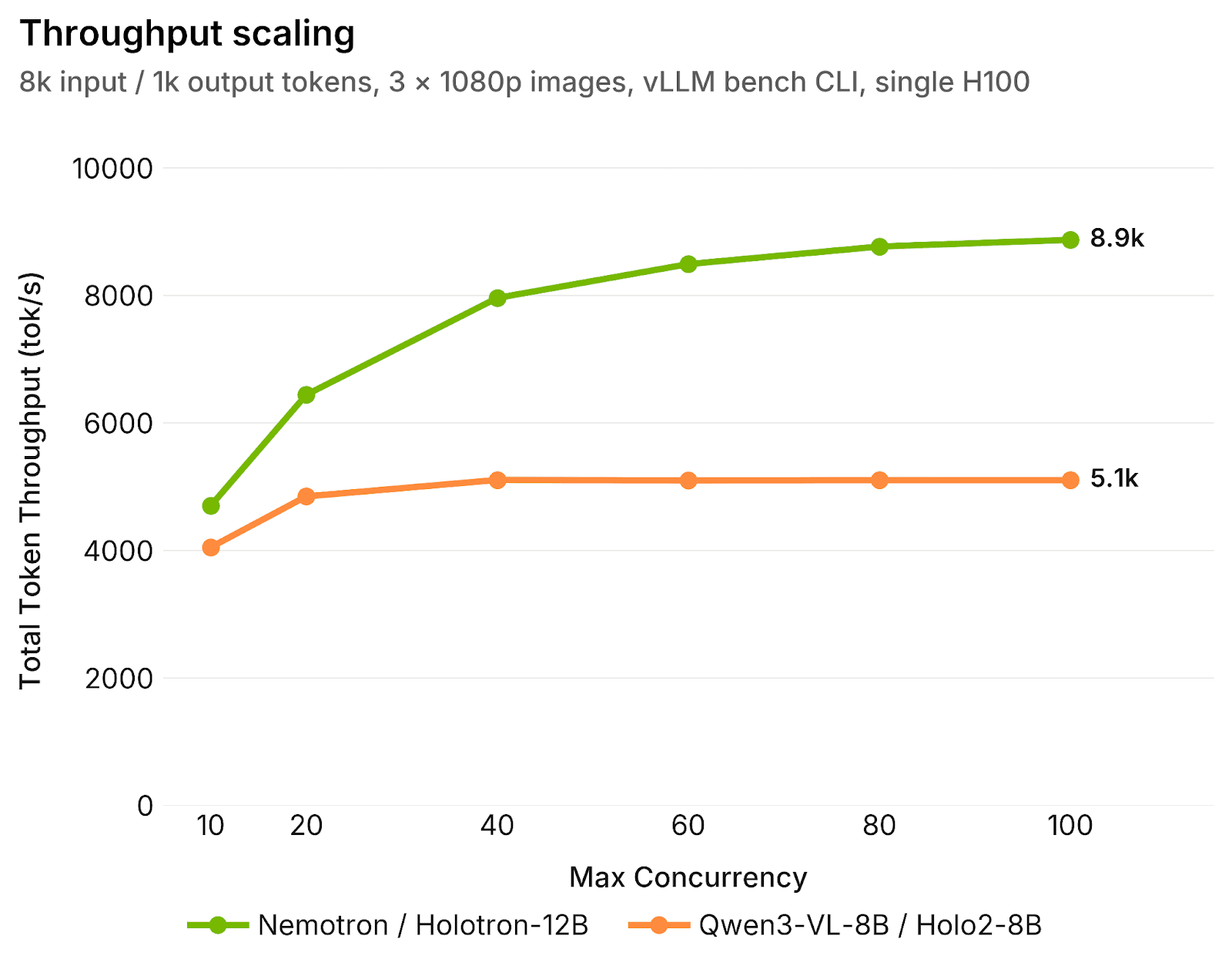

In a controlled experiment setup (see figure 2), Holotron-12B continues to scale efficiently as concurrency increases, with total token throughput rising steadily to 8.9k tokens/s at a max concurrency of 100. In contrast, the total token throughput of Holo2-8B plateaus much more quickly at 5.1k tokens/s. This behaviour highlights a key strength of the Nemotron architecture, namely more effective and efficient VRAM utilization, and smaller overall memory footprint, which allows much larger effective batch sizes on the same hardware. Even at large batch sizes, Holotron-12B maintains strong throughput.

Training and Evaluating Holotron-12B

Holotron-12B was trained in two stages. We started from Nemotron-Nano-12B-v2-VL-BF16, a multimodal base model published by NVIDIA. We then performed supervised fine-tuning on H Company’s proprietary localization and navigation data mixture, focusing on screen understanding, grounding, and UI-level interactions.

The final checkpoint was trained on approximately 14 billion tokens.

Agent Benchmarks

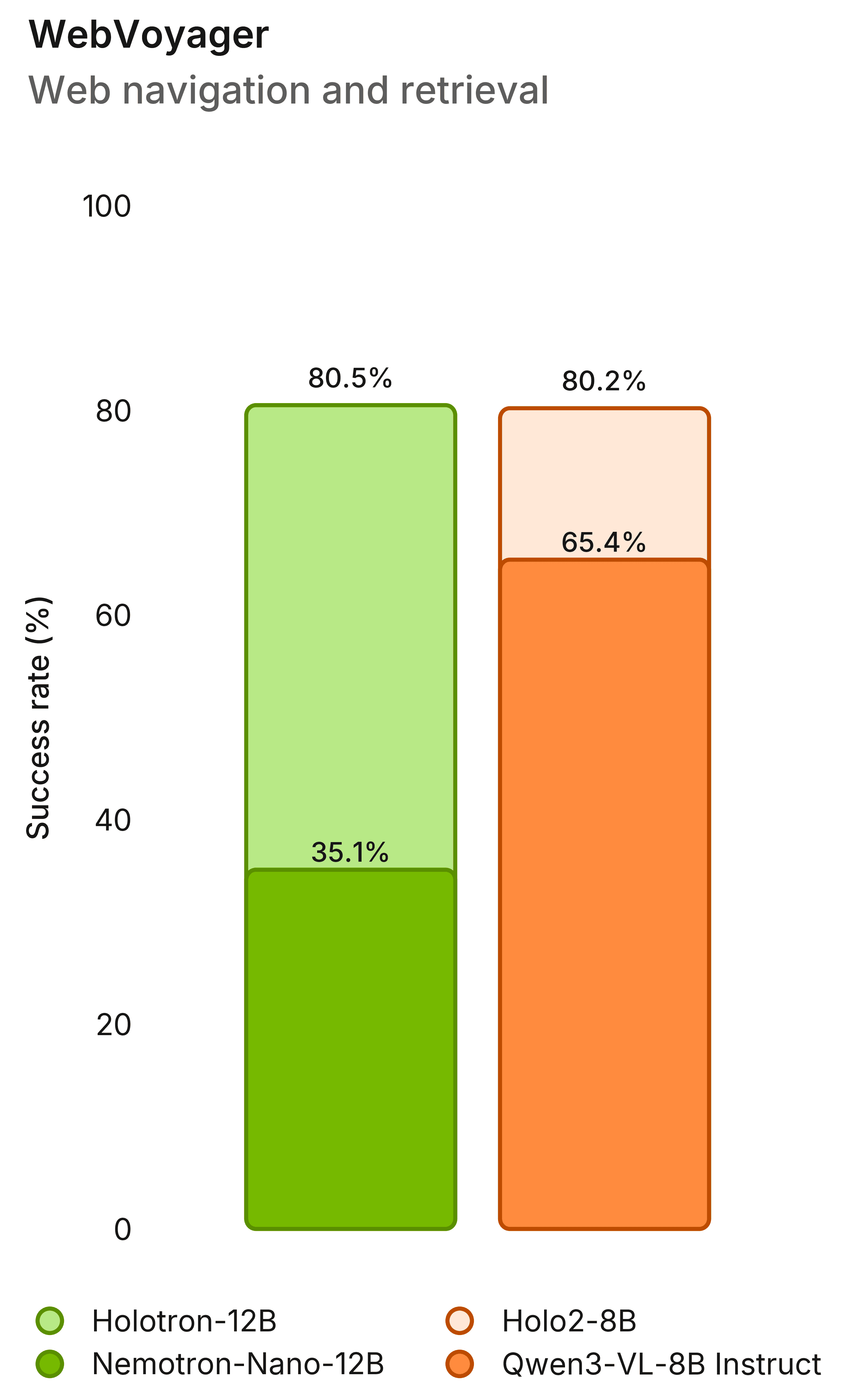

On computer-use and navigation benchmarks, Holotron-12B shows strong improvements over the Nemotron base model and strong performance with established agent models. Its WebVoyager performance increased from 35.1% to 80.5%, exceeding Holo2-8B’s performance on the benchmark and illustrating the model’s ability to perform effectively in an agentic setting.

Localization Benchmarks

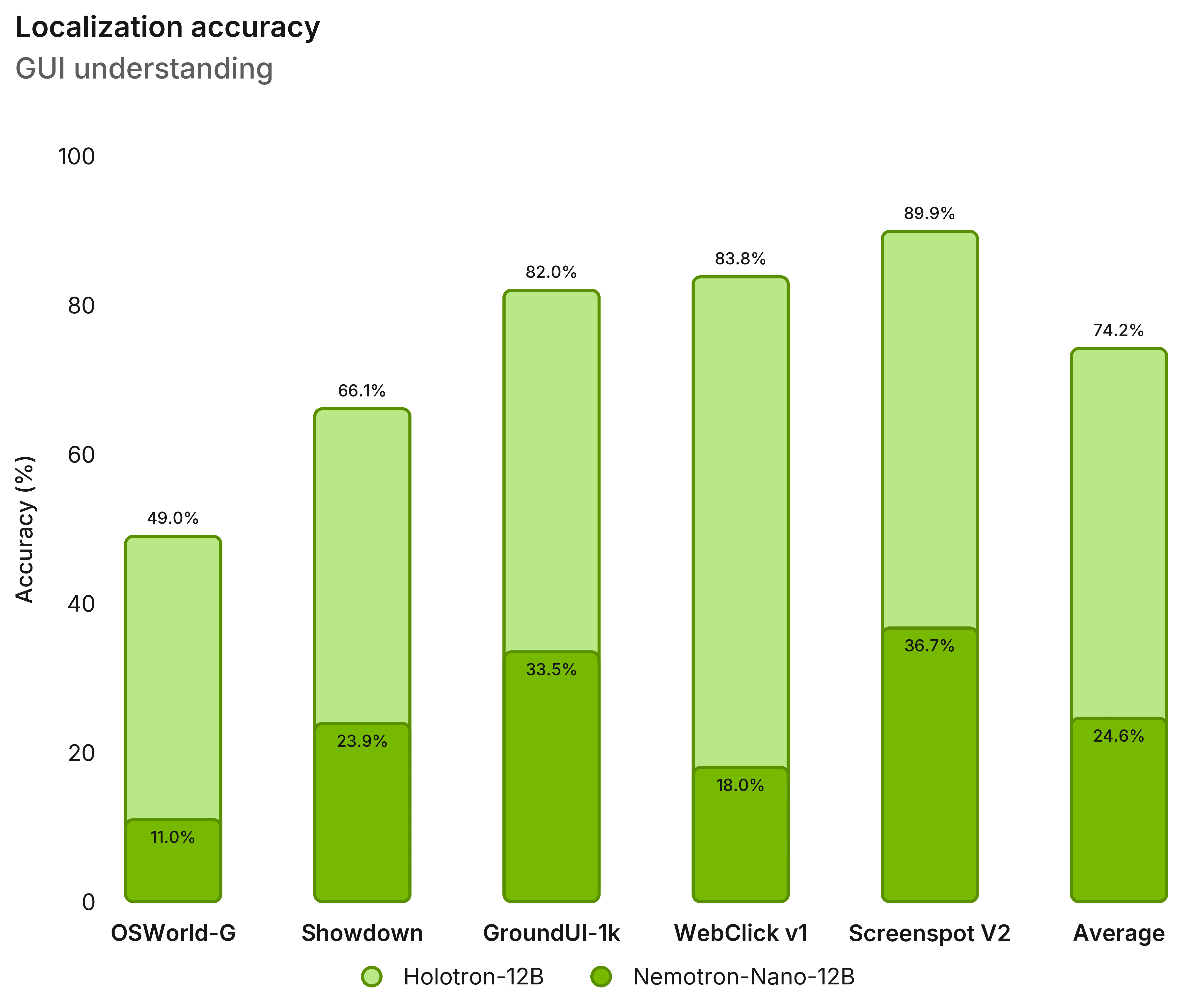

Holotron-12B also improves substantially over the base Nemotron model on localization and grounding benchmarks such as OS-World-G, GroundUI, and WebClick.

Conclusion

Holotron-12B demonstrates that the NVIDIA Nemotron VL model provides a strong foundation for real-world multimodal agents when paired with the right training setup and infrastructure work.

The model offers strong agent performance, significantly improved inference throughput, and a clear path for future improvements, particularly around higher-resolution vision training.

We look forward to seeing what others build with Holotron-12B. The model and checkpoints are available now on Hugging Face under an NVIDIA Open Model License.

What’s next: Scaling the Future of Agentic Intelligence with Nemotron 3 Omni

NVIDIA announced today the release of Nemotron 3 Omni. Building on the success of Holotron-12B, we are preparing to post-train this next generation of multimodal models. By leveraging the enhanced hybrid SSM-Attention and MoE architectural foundations of the Nemotron 3 family, we aim to deliver even greater leaps in reasoning capabilities and multimodal precision with the newly announced Nemotron 3 Omni. As this evolution pushes Holotron beyond research and into a commercial application, it will provide enterprises with the high-throughput, low-latency performance required for massive-scale autonomous "computer use" deployments.